If you’re responsible for the growth of an ecommerce site, you will already appreciate that what’s coming in AI is as hard to get a firm grip on as it is exciting. This guide picks out the most important elements to focus on in the coming year. The past year’s developments, from a vibrant open-source landscape to sophisticated multimodal models, have laid the groundwork for significant advances.

But although generative AI continues to captivate the tech world, attitudes are becoming more nuanced and mature as organisations shift their focus from experimentation to real-world initiatives. This year’s trends reflect a deepening sophistication and caution in AI development and deployment strategies, with an eye to ethics, safety and the evolving regulatory landscape.

Here are the top 10 AI and machine learning trends to prepare for in 2024.

1. Multimodal AI

Multimodal AI goes beyond traditional single-mode data processing to encompass multiple input types, such as text, images and sound — a step toward mimicking the human ability to process diverse sensory information.

“The interfaces of the world are multimodal,” said Mark Chen, head of frontiers research at OpenAI, in a November 2023 presentation at the conference EmTech MIT. “We want our models to see what we see and hear what we hear, and we want them to also generate content that appeals to more than one of our senses.”

The multimodal capabilities in OpenAI’s GPT-4 model enable the software to respond to visual and audio input. In his talk, Chen gave the example of taking photos of the inside of a fridge and asking ChatGPT to suggest a recipe based on the ingredients in the photo. The interaction could even encompass an audio element if using ChatGPT’s voice mode to pose the request aloud.

Although most generative AI initiatives today are text-based, “the real power of these capabilities is going to be when you can marry up text and conversation with images and video, cross-pollinate all three of those, and apply those to a variety of businesses,” said Matt Barrington, Americas emerging technologies leader at EY.

Multimodal AI’s real-world applications are diverse and expanding. In healthcare, for example, multimodal models can analyse medical images in light of patient history and genetic information to improve diagnostic accuracy. At the job function level, multimodal models can expand what various employees can do by extending basic design and coding capabilities to individuals without a formal background in those areas.

“I can’t draw to save my life,” Barrington said. “Well, now I can. I’m decent with language, so … I can plug into a capability like image generation, and some of those ideas that were in my head that I could never physically draw, I can have AI do.”

Moreover, introducing multimodal capabilities could strengthen models by offering them new data to learn from. “As our models get better and better at modelling language and start to hit the limits of what they can learn from language, we want to provide the models with raw inputs from the world so that they can perceive the world on their own and draw their inferences from things like video or audio data,” Chen said.

2. Agentic AI

Agentic AI marks a significant shift from reactive to proactive AI. AI agents are advanced systems that exhibit autonomy, proactivity and the ability to act independently. Unlike traditional AI systems, which mainly respond to user inputs and follow predetermined programming, AI agents are designed to understand their environment, set goals and act to achieve those objectives without direct human intervention. Autonomous systems of this kind are expected to remain a major focus for at least the next decade.

For example, in environmental monitoring, an AI agent could be trained to collect data, analyse patterns and initiate preventive actions in response to hazards such as early signs of a forest fire. Likewise, a financial AI agent could actively manage an investment portfolio using adaptive strategies that react to changing market conditions in real time.
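The collect, analyse and act cycle in the forest-fire example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Reading` type, the threshold values and the action names are all hypothetical, and the fixed rules stand in for the trained model a real agent would use.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One hypothetical sensor sample from a monitored forest area."""
    temperature_c: float
    smoke_ppm: float


# Illustrative thresholds only; real values would come from domain experts.
TEMP_LIMIT_C = 45.0
SMOKE_LIMIT_PPM = 150.0


def assess(reading: Reading) -> str:
    """Analyse step: classify a reading as 'alert', 'watch' or 'ok'."""
    hot = reading.temperature_c > TEMP_LIMIT_C
    smoky = reading.smoke_ppm > SMOKE_LIMIT_PPM
    if hot and smoky:
        return "alert"
    if hot or smoky:
        return "watch"
    return "ok"


def choose_action(status: str) -> str:
    """Act step: map the assessed status to a (hypothetical) action name."""
    actions = {
        "alert": "notify_fire_service",
        "watch": "increase_sampling_rate",
        "ok": "continue_monitoring",
    }
    return actions[status]


def run_agent(readings: list[Reading]) -> list[str]:
    """Collect, analyse and act on each reading without human intervention."""
    return [choose_action(assess(reading)) for reading in readings]
```

Calling `run_agent` on a list of readings returns one action per reading. In a fuller system, the rule-based `assess` function would be replaced by a learned model and the action names by calls to real services.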

“2023 was the year of being able to chat with an AI,” wrote computer scientist Peter Norvig, a fellow at Stanford’s Human-Centered AI Institute, in a recent blog post. “In 2024, we’ll see the ability for agents to get stuff done for you. Make reservations, plan a trip, connect to other services.”

In addition, combining agentic and multimodal AI could open up new possibilities. In the aforementioned presentation, Chen gave the example of an application designed to identify the contents of an uploaded image. Previously, someone looking to build such an application would have needed to train their image recognition model and then figure out how to deploy it. But with multimodal, agentic models, this could all be accomplished through natural language prompting.

3. Open source AI

Building large language models and other powerful generative AI systems is an expensive process that requires enormous amounts of computing and data. But using an open-source model enables developers to build on top of others’ work, reducing costs and expanding AI access. Open-source AI is publicly available, typically for free, enabling organisations and researchers to contribute to and build on existing code.

GitHub data from the past year shows a remarkable increase in developer engagement with AI, particularly generative AI. In 2023, generative AI projects entered the top 10 most popular projects across the code hosting platform for the first time, with projects such as Stable Diffusion and AutoGPT pulling in thousands of first-time contributors.

Early in the year, open-source generative models were limited in number, and their performance often lagged behind proprietary options such as ChatGPT. However, the landscape broadened significantly throughout 2023 to include powerful open-source contenders such as Meta’s Llama 2 and Mistral AI’s Mixtral models. This could shift the dynamics of the AI landscape in 2024 by providing smaller, less-resourced entities with access to sophisticated AI models and tools that were previously out of reach.

“It gives everyone easy, fairly democratised access, and it’s great for experimentation and exploration,” Barrington said.

Open-source approaches can also encourage transparency and ethical development, as more eyes on the code means a greater likelihood of identifying biases, bugs and security vulnerabilities. But experts have also expressed concerns about the misuse of open-source AI to create disinformation and other harmful content. In addition, building and maintaining open-source projects is difficult even for traditional software, let alone complex and compute-intensive AI models.

4. Retrieval-augmented generation

Although generative AI tools were widely adopted in 2023, they continue to be plagued by the problem of hallucinations: plausible-sounding but incorrect responses to users’ queries. This limitation has presented a roadblock to enterprise adoption, where hallucinations in business-critical or customer-facing scenarios could be catastrophic. Retrieval-augmented generation (RAG) has emerged as a technique for reducing hallucinations, with potentially profound implications for enterprise AI adoption.

RAG blends text generation with information retrieval to enhance the accuracy and relevance of AI-generated content. It enables LLMs to access external information, helping them produce more accurate and contextually aware responses. Bypassing the need to store all knowledge directly in the LLM also reduces model size, which increases speed and lowers costs.

“You can use RAG to go gather a ton of unstructured information, documents, etc., [and] feed it into a model without having to fine-tune or custom-train a model,” Barrington said.
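As a rough illustration of the retrieve-then-generate idea, the sketch below ranks a small document store against a query and builds a prompt from the best match. It makes several assumptions: the documents are invented for the example, simple word-count cosine similarity stands in for the embedding search a production system would more likely use, and the final call to an LLM is left out entirely.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; in practice this would be enterprise documents.
DOCUMENTS = [
    "Our returns policy allows refunds within 30 days of delivery.",
    "Standard shipping takes three to five working days.",
    "Gift cards never expire and can be used on any product.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Retrieval step: return the k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 1) -> str:
    """Augmentation step: place the retrieved text ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )
```

The string returned by `build_prompt("How long does shipping take?", DOCUMENTS)` would then be sent to whichever model the organisation uses. Updating the answers means editing the document store rather than retraining the model.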

These benefits are particularly enticing for enterprise applications where up-to-date factual knowledge is crucial. For example, businesses can use RAG with foundation models to create more efficient and informative chatbots and virtual assistants.

5. Customised enterprise generative AI models

Massive, general-purpose tools such as Midjourney and ChatGPT have attracted the most attention among consumers exploring generative AI. But for business use cases, smaller, narrow-purpose models could prove to have the most staying power, driven by the growing demand for AI systems that can meet niche requirements.

While creating a new model from scratch is a possibility, it’s a resource-intensive proposition that will be out of reach for many organisations. To build customised generative AI, most organisations instead modify existing AI models, for example by tweaking their architecture or fine-tuning them on a domain-specific data set. This can be cheaper than either building a new model from the ground up or relying on API calls to a public LLM.

“Calls to GPT-4 as an API, just as an example, are very expensive, both in terms of cost and in terms of latency — how long it can take to return a result,” said Shane Luke, vice president of AI and machine learning at Workday. “We are working a lot … on optimising so that we have the same capability, but it’s very targeted and specific. And so it can be a much smaller model that’s more manageable.”

The key advantage of customised generative AI models is their ability to cater to niche markets and user needs. Tailored generative AI tools can be built for almost any scenario, from customer support to supply chain management to document review. This is especially relevant for sectors with highly specialised terminology and practices, such as ecommerce, healthcare, finance and legal.

In many business use cases, the most massive LLMs are overkill. Although ChatGPT might be the state of the art for a consumer-facing chatbot designed to handle any query, “it’s not the state of the art for smaller enterprise applications,” Luke said.

Barrington expects to see enterprises exploring a more diverse range of models in the coming year as AI developers’ capabilities begin to converge. “We’re expecting, over the next year or two, for there to be a much higher degree of parity across the models — and that’s a good thing,” he said.

On a smaller scale, Luke has seen a similar scenario play out at Workday, which provides a set of AI services for teams to experiment with internally. Although employees started out using mostly OpenAI services, Luke said, he’s gradually seen a shift toward a mix of models from various providers, including Google and AWS.

Customised models can also offer privacy and security benefits. In light of these, stricter AI regulation in the coming years could push organisations to focus their energies on proprietary models, explained Gillian Crossan, risk advisory principal and global technology sector leader at Deloitte.

“It’s going to encourage enterprises to focus more on private models that are proprietary, that are domain-specific, rather than focus on these large language models that are trained with data from all over the internet and everything that brings with it,” she said.

6. Need for AI and machine learning talent

Designing, training and testing a machine learning model is no easy feat — much less pushing it to production and maintaining it in a complex organisational IT environment. It’s no surprise, then, that the growing need for AI and machine learning talent is expected to continue into 2024 and beyond.

“The market is still really hot around talent,” Luke said. “It’s very easy to get a job in this space.”

In particular, as AI and machine learning become more integrated into business operations, there’s a growing need for professionals who can bridge the gap between theory and practice. This requires the ability to deploy, monitor and maintain AI systems in real-world settings — a discipline often referred to as MLOps, short for machine learning operations.

In a recent O’Reilly report, respondents cited AI programming, data analysis and statistics, and operations for AI and machine learning as the top three skills their organisations needed for generative AI projects. These types of skills, however, are in short supply. “That’s going to be one of the challenges around AI — to be able to have the talent readily available,” Crossan said.

In 2024, expect organisations of all kinds, not just big tech companies, to seek out talent with these types of skills. With IT and data nearly ubiquitous as business functions and AI initiatives rising in popularity, building internal AI and machine learning capabilities is poised to be the next stage in digital transformation.

Crossan also emphasised the importance of diversity in AI initiatives at every level, from technical teams building models up to the board. “One of the big issues with AI and the public models is the amount of bias that exists in the training data,” she said. “And unless you have that diverse team within your organisation that is challenging the results and challenging what you see, you are going to potentially end up in a worse place than you were before AI.”

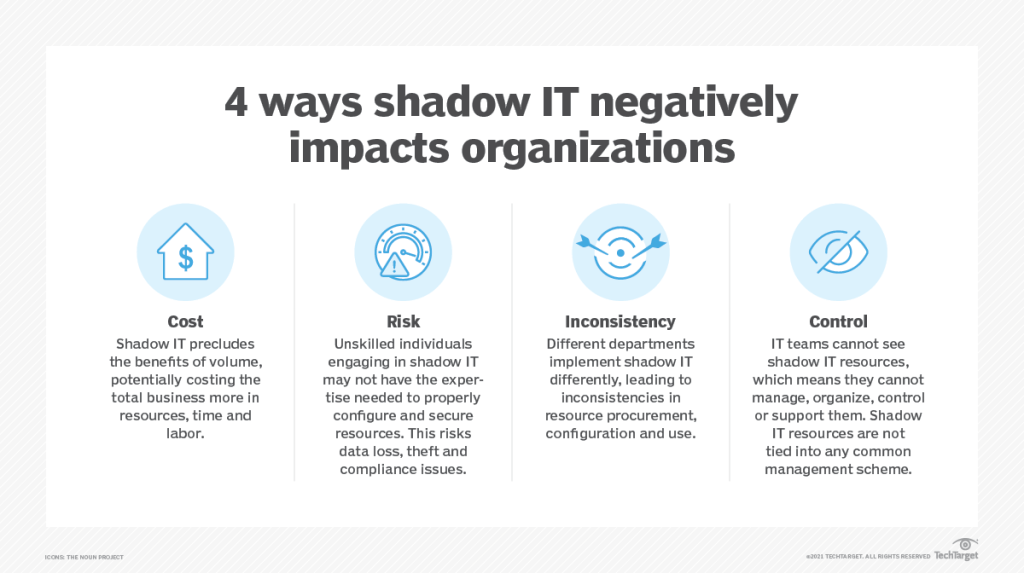

7. Shadow AI

As employees across job functions become interested in generative AI, organisations are facing the issue of shadow AI: the use of AI within an organisation without explicit approval or oversight from the IT department. This trend is becoming increasingly prevalent as AI becomes more accessible, enabling even nontechnical workers to use it independently.

Shadow AI typically arises when employees need quick solutions to a problem or want to explore new technology faster than official channels allow. This is especially common for easy-to-use AI chatbots, which employees can try out in their web browsers with little difficulty — without going through IT review and approval processes.

On the plus side, exploring ways to use these emerging technologies evinces a proactive, innovative spirit. But it also carries risk, since end users often lack relevant information on security, data privacy and compliance. For example, a user might feed trade secrets into a public-facing LLM without realising that doing so exposes that sensitive information to third parties.

“Once something gets out into these public models, you cannot pull it back,” Barrington said. “So there’s a bit of a fear factor and risk angle that’s appropriate for most enterprises, regardless of sector, to think through.”

In 2024, organisations will need to take steps to manage shadow AI through governance frameworks that balance supporting innovation with protecting privacy and security. This could include setting clear acceptable AI use policies and providing approved platforms, as well as encouraging collaboration between IT and business leaders to understand how various departments want to use AI.

“The reality is, everybody’s using it,” Barrington said, about recent EY research finding that 90% of respondents used AI at work. “Whether you like it or not, your people are using it today, so you should figure out how to align them to the ethical and responsible use of it.”

8. A generative AI reality check

As organisations progress from the initial excitement surrounding generative AI to actual adoption and integration, they’re likely to face a reality check in 2024 — a phase often referred to as the “trough of disillusionment” in the Gartner Hype Cycle.

“We’re seeing a rapid shift from what we’ve been calling this experimentation phase into asking, ‘How do I run this at scale across my enterprise?’” Barrington said.

As early enthusiasm begins to wane, organisations are confronting generative AI’s limitations, such as output quality, security and ethics concerns, and integration difficulties with existing systems and workflows. The complexity of implementing and scaling AI in a business environment is often underestimated, and tasks such as ensuring data quality, training models and maintaining AI systems in production can be more challenging than initially anticipated.

“It’s not very easy to build a generative AI application and put it into production in a real product setting,” Luke said.

The silver lining is that these growing pains, while unpleasant in the short term, could result in a healthier, more tempered outlook in the long run. Moving past this phase will require setting realistic expectations for AI and developing a more nuanced understanding of what AI can and can’t do. AI projects should be clearly tied to business goals and practical use cases, with a clear plan in place for measuring outcomes.

“If you have very loose use cases that are not clearly defined, that’s probably what’s going to hold you up the most,” Crossan said.

9. Increased attention to AI ethics and security risks

The proliferation of deepfakes and sophisticated AI-generated content is raising alarms about the potential for misinformation and manipulation in media and politics, as well as identity theft and other types of fraud. AI can also enhance the efficacy of ransomware and phishing attacks, making them more convincing, more adaptable and harder to detect.

Although efforts are underway to develop technologies for detecting AI-generated content, doing so remains challenging. Current AI watermarking techniques are relatively easy to circumvent, and existing AI detection software can be prone to false positives.

The increasing ubiquity of AI systems also highlights the importance of ensuring that they are transparent and fair — for example, by carefully vetting training data and algorithms for bias. Crossan emphasised that these ethics and compliance considerations should be interwoven throughout the process of developing an AI strategy.

“You have to be thinking about, as an enterprise … implementing AI, what are the controls that you’re going to need?” she said. “And that starts to help you plan a bit for the regulation so that you’re doing it together. You’re not doing all of this experimentation with AI and then realising, ‘Oh, now we need to think about the controls.’ You do it at the same time.”

Safety and ethics can also be another reason to look at smaller, more narrowly tailored models, Luke pointed out. “These smaller, tuned, domain-specific models are just far less capable than the really big ones — and we want that,” he said. “They’re less likely to be able to output something that you don’t want because they’re just not capable of as many things.”

10. Evolving AI regulation

Unsurprisingly, given these ethics and security concerns, 2024 is shaping up to be a pivotal year for AI regulation, with laws, policies and industry frameworks rapidly evolving in the UK, the EU, the U.S. and elsewhere. Organisations will need to stay informed and adaptable in the coming year, as shifting compliance requirements could have significant implications for global operations and AI development strategies.

The EU’s AI Act, on which members of the EU’s Parliament and Council recently reached a provisional agreement, represents the world’s first comprehensive AI law. If adopted, it would ban certain uses of AI, impose obligations for developers of high-risk AI systems and require transparency from companies using generative AI, with non-compliance potentially resulting in multimillion-dollar fines. And it’s not just new legislation that could have an effect in 2024.

“Interestingly enough, the regulatory issue that I see could have the biggest impact is GDPR — good old-fashioned GDPR — because of the need for rectification and erasure, the right to be forgotten, with public large language models,” Crossan said. “How do you control that when they’re learning from massive amounts of data, and how can you assure that you’ve been forgotten?”

Together with the GDPR, the AI Act could position the EU as a global AI regulator, potentially influencing AI use and development standards worldwide. “They’re certainly ahead of where we are in the U.S. from an AI regulatory perspective,” Crossan said.

The U.S. doesn’t yet have comprehensive federal legislation comparable to the EU’s AI Act, but experts encourage organisations not to wait to think about compliance until formal requirements are in force. At EY, for example, “we’re engaging with our clients to get ahead of it,” Barrington said. Otherwise, businesses could find themselves playing catch-up when regulations do come into effect.

Beyond the ripple effects of European policy, recent activity in the U.S. executive branch also suggests how AI regulation could play out stateside. President Joe Biden’s October 2023 executive order implemented new mandates, such as requiring AI developers to share safety test results with the U.S. government and imposing restrictions to protect against the risks of AI being used to engineer dangerous biological materials. Various federal agencies have also issued guidance targeting specific sectors, such as NIST’s AI Risk Management Framework and the Federal Trade Commission’s statement warning businesses against making false claims about their products’ AI use.

Further complicating matters, 2024 is an election year in the U.S., and the current slate of presidential candidates shows a wide range of positions on tech policy questions. A new administration could theoretically change the executive branch’s approach to AI oversight by reversing or revising Biden’s executive order and nonbinding agency guidance.