Generative AI and other foundation models are changing the AI game, taking assistive technology to a new level, reducing application development time, and bringing powerful capabilities to nontechnical users.

For many of us, entering one prompt into ChatGPT, developed by OpenAI, was all it took to see the power of generative AI. In the first five days of its release, more than a million users logged into the platform to experience it for themselves. OpenAI’s servers can barely keep up with demand, regularly flashing a message that users need to return later when server capacity frees up.

Products like ChatGPT and GitHub Copilot, as well as the underlying AI models that power such systems (Stable Diffusion, DALL·E 2, GPT-3, to name a few), are taking technology into realms once thought to be reserved for humans. With generative AI, computers can now arguably exhibit creativity. They can produce original content in response to queries, drawing from data they’ve ingested and interactions with users. They can develop blogs, sketch package designs, write computer code, or even theorise on the reason for a production error.

This latest class of generative AI systems has emerged from foundation models—large-scale, deep learning models trained on massive, broad, unstructured data sets (such as text and images) that cover many topics. Developers can adapt the models for a wide range of use cases, with little fine-tuning required for each task.

For example, GPT-3.5, the foundation model underlying ChatGPT, has also been used to translate text, and scientists used an earlier version of GPT to create novel protein sequences. In this way, the power of these capabilities is accessible to all, including developers who lack specialised machine learning skills and, in some cases, people with no technical background. Using foundation models can also reduce the time for developing new AI applications to a level rarely possible before.

Generative AI promises to make this year one of the most exciting years yet for AI. But as with every new technology, business leaders must proceed with eyes wide open, because the technology today presents many ethical and practical challenges.

Pushing AI tools further into human realms

More than a decade ago, McKinsey wrote an article in which we sorted economic activity into three buckets—production, transactions, and interactions—and examined the extent to which technology had made inroads into each. Machines and factory technologies transformed production by augmenting and automating human labour during the Industrial Revolution more than 100 years ago, and AI has further amped up efficiencies on the manufacturing floor. Transactions have undergone many technological iterations over approximately the same time frame, including most recently digitisation and, frequently, automation.

Until recently, interaction labour, such as customer service, has experienced the least mature technological interventions. Generative AI is set to change that by undertaking interaction labour in a way that approximates human behaviour closely and, in some cases, imperceptibly. That’s not to say these tools are intended to work without human input and intervention. In many cases, they are most powerful in combination with humans, augmenting their capabilities and enabling them to get work done faster and better.

Generative AI is also pushing technology into a realm thought to be unique to the human mind: creativity. The technology leverages its inputs (the data it has ingested and a user prompt) and experiences (interactions with users that help it “learn” new information and what’s correct/incorrect) to generate entirely new content. While dinner table debates will rage for the foreseeable future on whether this truly equates to creativity, most would likely agree that these tools stand to unleash more creativity into the world by prompting humans with starter ideas.

Business uses for AI tools abound

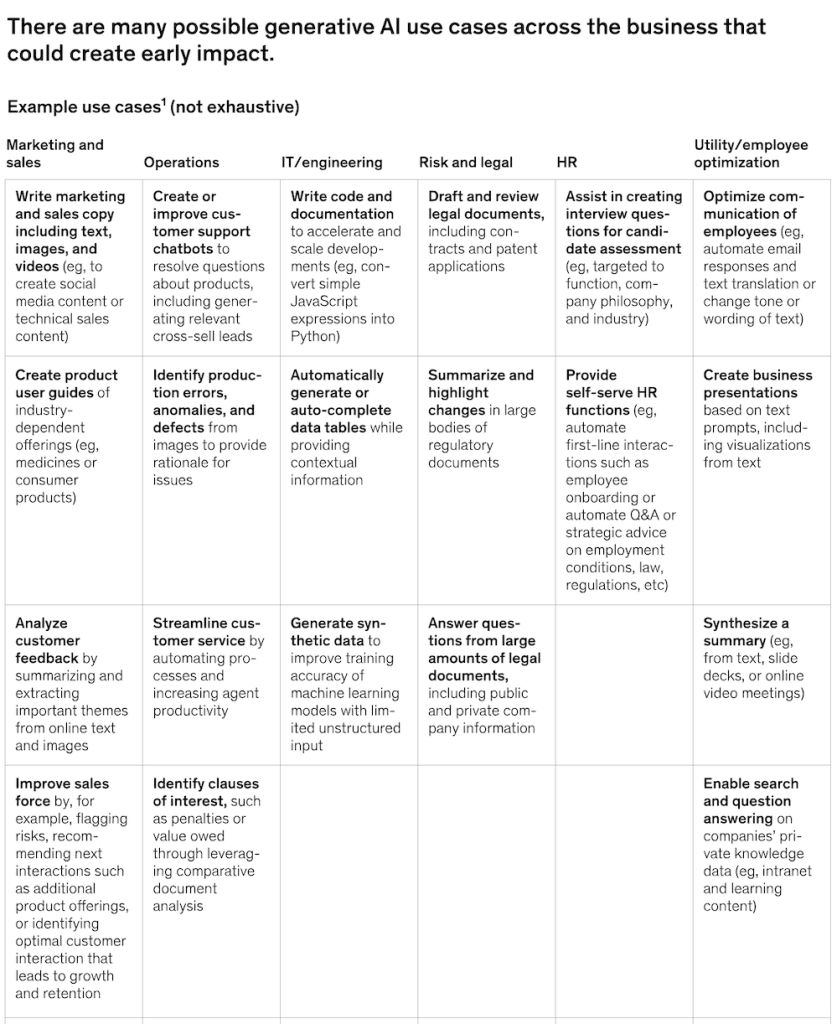

These models are in the early days of scaling, but we’ve started seeing the first batch of applications across functions, including the following (exhibit):

- Marketing and sales—crafting personalised marketing, social media, and technical sales content (including text, images, and video); creating assistants aligned to specific businesses, such as retail

- Operations—generating task lists for efficient execution of a given activity

- IT/engineering—writing, documenting, and reviewing code

- Risk and legal—answering complex questions, pulling from vast amounts of legal documentation, and drafting and reviewing annual reports

- R&D—accelerating drug discovery through better understanding of diseases and discovery of chemical structures

Excitement for AI tools is warranted, but caution is required

The awe-inspiring results of generative AI might make it seem like a ready-set-go technology, but that’s not the case. Its nascency requires executives to proceed with an abundance of caution. Technologists are still working out the kinks, and plenty of practical and ethical issues remain open. Here are just a few:

- Like humans, generative AI can be wrong. ChatGPT, for example, sometimes “hallucinates,” meaning it confidently generates entirely inaccurate information in response to a user question and has no built-in mechanism to signal this to the user or challenge the result. For example, we have observed instances when the tool was asked to create a short bio and it generated several incorrect facts for the person, such as listing the wrong educational institution.

- Filters are not yet effective enough to catch inappropriate content. Users of an image-generating application that can create avatars from a person’s photo received avatar options from the system that portrayed them nude, even though they had input appropriate photos of themselves.

- Systemic biases still need to be addressed. These systems draw from massive amounts of data that might include unwanted biases.

- Individual company norms and values aren’t reflected. Companies will need to adapt the technology to incorporate their culture and values, an exercise that requires technical expertise and computing power beyond what some companies may have ready access to.

- Intellectual property questions are up for debate. When a generative AI model brings forward a new product design or idea based on a user prompt, who can lay claim to it? What happens when it plagiarises a source based on its training data?

Initial steps for executives

In companies considering generative AI, executives will want to quickly identify the parts of their business where the technology could have the most immediate impact and implement a mechanism to monitor it, given that it is expected to evolve quickly. A no-regrets move is to assemble a cross-functional team, including data science practitioners, legal experts, and functional business leaders, to think through basic questions, such as these:

- Where might the technology aid or disrupt our industry and/or our business’s value chain?

- What are our policies and posture? For example, are we watchfully waiting to see how the technology evolves, investing in pilots, or looking to build a new business? Should the posture vary across areas of the business?

- Given the limitations of the models, what are our criteria for selecting use cases to target?

- How do we pursue building an effective ecosystem of partners, communities, and platforms?

- What legal and community standards should these models adhere to so we can maintain trust with our stakeholders?

Meanwhile, it’s essential to encourage thoughtful innovation across the organisation by standing up guardrails alongside sandboxed environments for experimentation. Many such environments are readily available via the cloud, with more likely on the horizon.